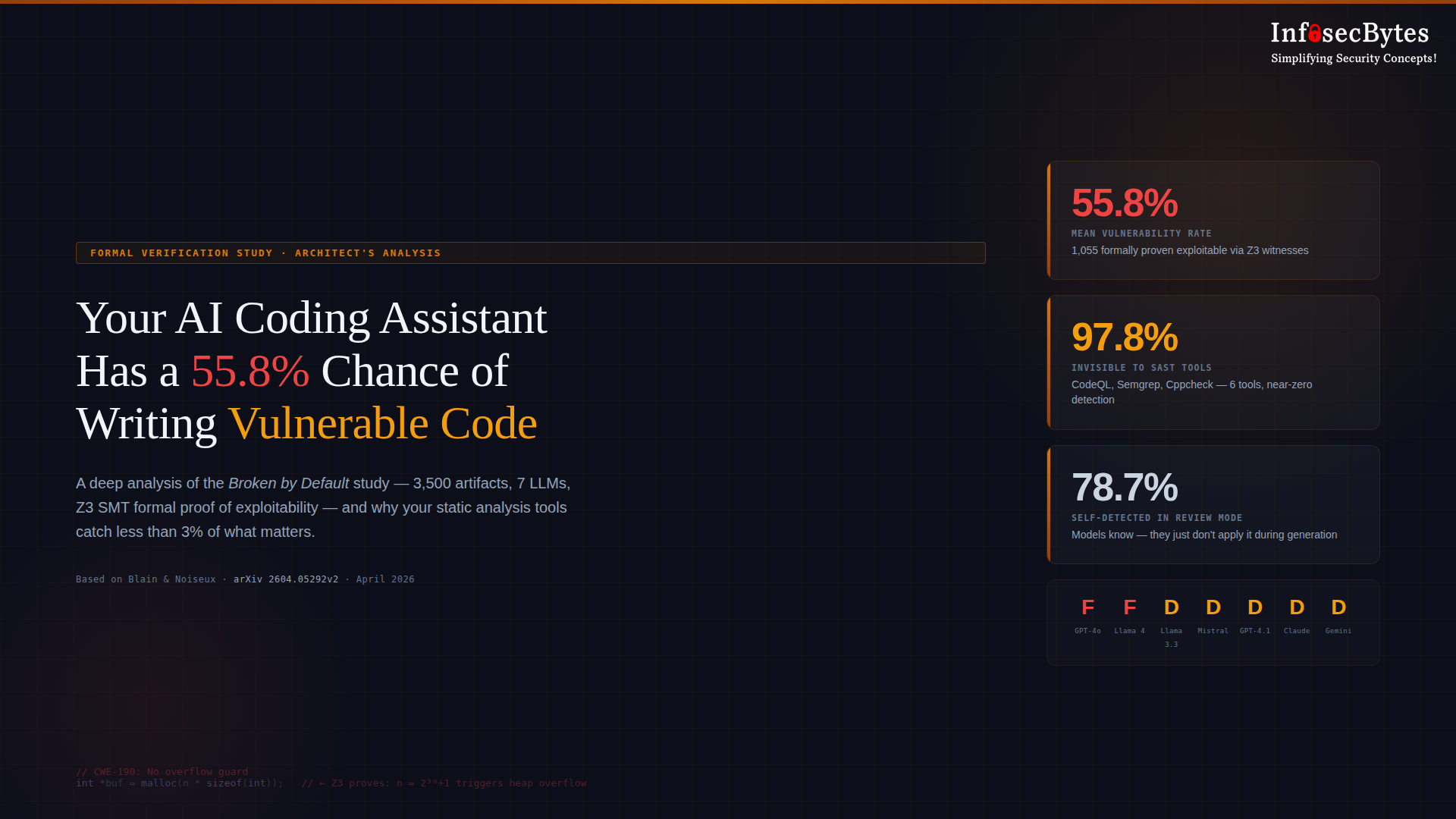

A new formal verification study — Broken by Default — used the Z3 SMT solver to mathematically prove that 55.8% of AI-generated code contains exploitable vulnerabilities. Across 3,500 artifacts from seven production LLMs, 1,055 findings were formally proven with concrete exploit inputs. The worst part? Six industry-standard static analysis tools combined — including CodeQL — missed 97.8% of them. This is a security architect’s deep analysis of what the study found, why it matters, and what security leaders need to do about it now.

Simplifying Security Concepts!