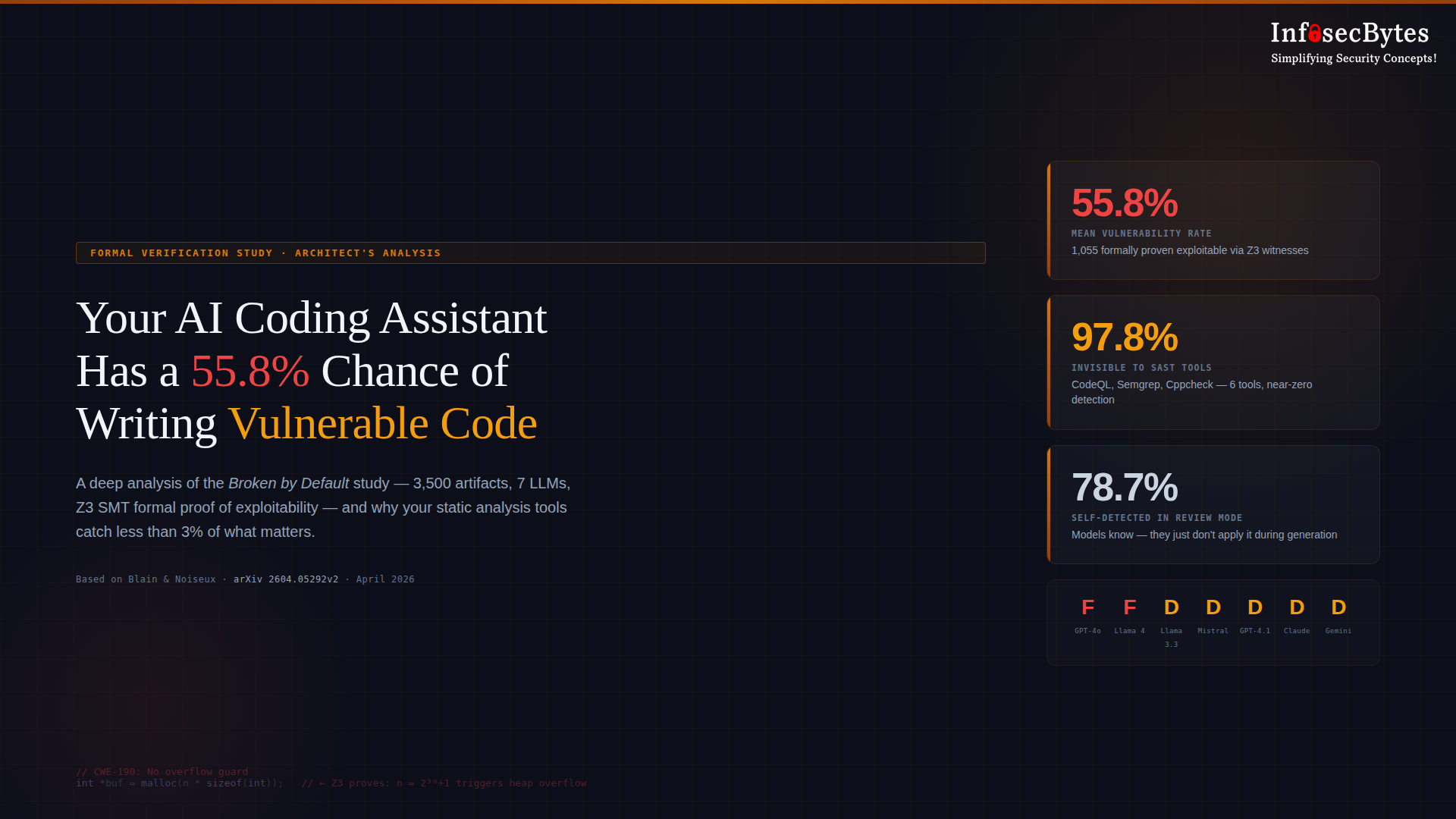

A new formal verification study — Broken by Default — used the Z3 SMT solver to mathematically prove that 55.8% of AI-generated code contains exploitable vulnerabilities. Across 3,500 artifacts from seven production LLMs, 1,055 findings were formally proven with concrete exploit inputs. The worst part? Six industry-standard static analysis tools combined — including CodeQL — missed 97.8% of them. This is a security architect’s deep analysis of what the study found, why it matters, and what security leaders need to do about it now.

The LiteLLM Supply Chain Attack: What Every AI Builder Needs to Know

On March 24, 2026, two malicious versions of LiteLLM, a Python library with 95 million monthly downloads across the AI developer ecosystem, were quietly pushed to PyPI. The attack didn’t begin with LiteLLM. It began with Trivy, a vulnerability scanner running inside LiteLLM’s own CI pipeline without version pinning. One compromised dependency handed attackers the PyPI publishing credentials they needed. What followed was a multi-stage credential stealer that executed silently on every Python process, swept up SSH keys, cloud credentials, Kubernetes tokens, and API keys, then encrypted everything and exfiltrated it to an attacker-controlled server. The TeamPCP campaign is still active. This is the blueprint for AI supply chain attacks going forward, and your current defenses are probably not sufficient.
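To make the "without version pinning" point concrete, here is a minimal sketch of a check that flags CI tool references not pinned to a full commit SHA. It assumes a GitHub Actions-style workflow with `uses:` lines, which the summary above does not confirm; the action names in `EXAMPLE_WORKFLOW` and the helper `unpinned_actions` are hypothetical and exist only for illustration.

```python
import re

# Assumption: the CI config is a GitHub Actions-style workflow where
# third-party tools are referenced as `uses: owner/name@ref`.
USES_RE = re.compile(r"^\s*-?\s*uses:\s*(?P<ref>\S+)", re.MULTILINE)

# A reference counts as pinned only if it ends in a full 40-character commit SHA.
SHA_RE = re.compile(r"@[0-9a-f]{40}$")


def unpinned_actions(workflow_text: str) -> list[str]:
    """Return every `uses:` reference that is not pinned to a commit SHA."""
    refs = [m.group("ref") for m in USES_RE.finditer(workflow_text)]
    # Local actions (./path) live in the same repository, so they are skipped.
    return [r for r in refs if not r.startswith("./") and not SHA_RE.search(r)]


# Hypothetical workflow text; none of these names come from the incident.
EXAMPLE_WORKFLOW = """
jobs:
  scan:
    steps:
      - uses: actions/checkout@v4
      - uses: example-org/scanner-action@master
      - uses: example-org/pinned-action@0123456789abcdef0123456789abcdef01234567
"""

if __name__ == "__main__":
    for ref in unpinned_actions(EXAMPLE_WORKFLOW):
        print("unpinned:", ref)
```

A tag or branch such as `@v4` or `@master` can be repointed by whoever controls the upstream repository, which is why this sketch treats only a full commit SHA as pinned.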

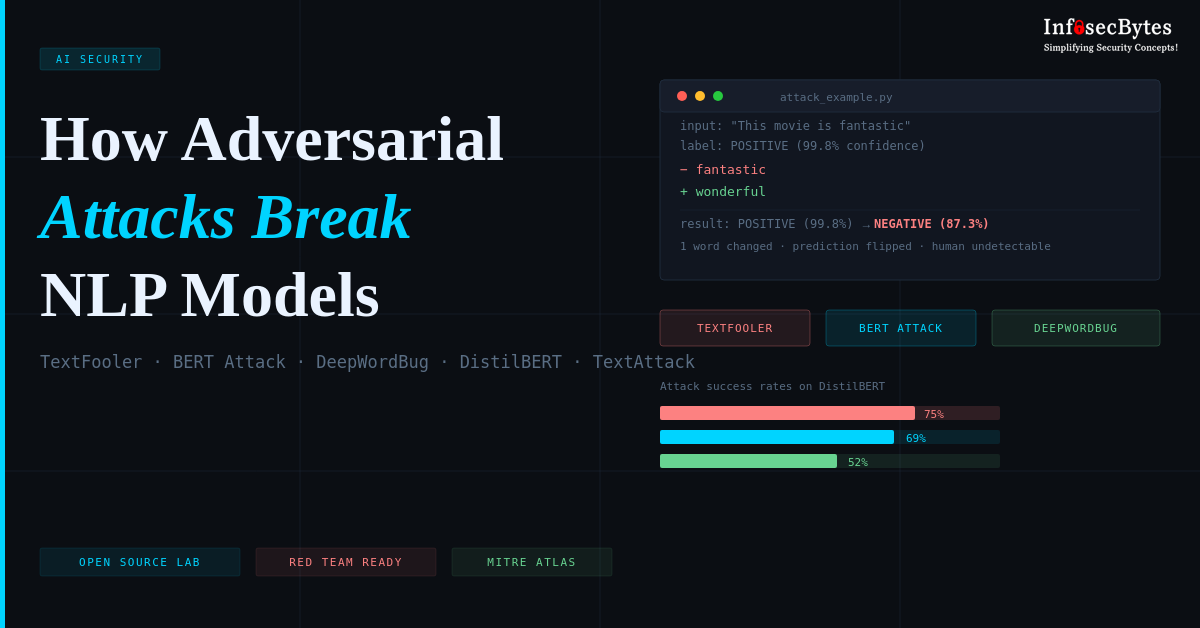

How Adversarial Attacks Break NLP Models in Production

Modern NLP models power critical systems, from content moderation to fraud detection, yet remain surprisingly fragile. A single word swap can flip a sentiment prediction from positive to negative. A single character edit can bypass spam filters. This post walks through three real-world adversarial techniques (TextFooler, BERT-Attack, DeepWordBug) that achieve 52-75% success rates against production transformers, and introduces an open-source lab where you can reproduce these attacks using the TextAttack framework. Complete with Docker setup, attack metrics, and defense strategies, this is adversarial ML research you can run in 10 minutes.
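The "single character edit" idea can be illustrated with a toy example. The sketch below is not DeepWordBug or any TextAttack recipe; it swaps two adjacent characters in each flagged word to slip past a deliberately naive keyword filter. The blocklist, the filter, and the helper names are all hypothetical.

```python
PUNCT = ".,!?"
BLOCKLIST = {"free", "winner", "prize"}  # hypothetical keyword list


def naive_spam_filter(text: str) -> bool:
    """Return True if any blocklisted word appears as a whole token."""
    return any(tok.strip(PUNCT).lower() in BLOCKLIST for tok in text.split())


def perturb(word: str) -> str:
    """Swap the first pair of differing adjacent interior characters."""
    for i in range(1, len(word) - 2):
        if word[i] != word[i + 1]:
            return word[:i] + word[i + 1] + word[i] + word[i + 2:]
    return word  # too short, or no differing pair to swap


def attack(text: str) -> str:
    """Apply one character-level edit to every blocklisted token."""
    out = []
    for tok in text.split():
        core = tok.strip(PUNCT)
        if core.lower() in BLOCKLIST:
            tok = tok.replace(core, perturb(core), 1)
        out.append(tok)
    return " ".join(out)


if __name__ == "__main__":
    original = "You are a winner! Claim your free prize now."
    adversarial = attack(original)
    print(naive_spam_filter(original), original)
    print(naive_spam_filter(adversarial), adversarial)
```

A human still reads the perturbed message as the same offer, while the exact-match filter no longer fires. Attacks on transformer models follow the same principle but choose edits by querying the target model rather than a fixed word list.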