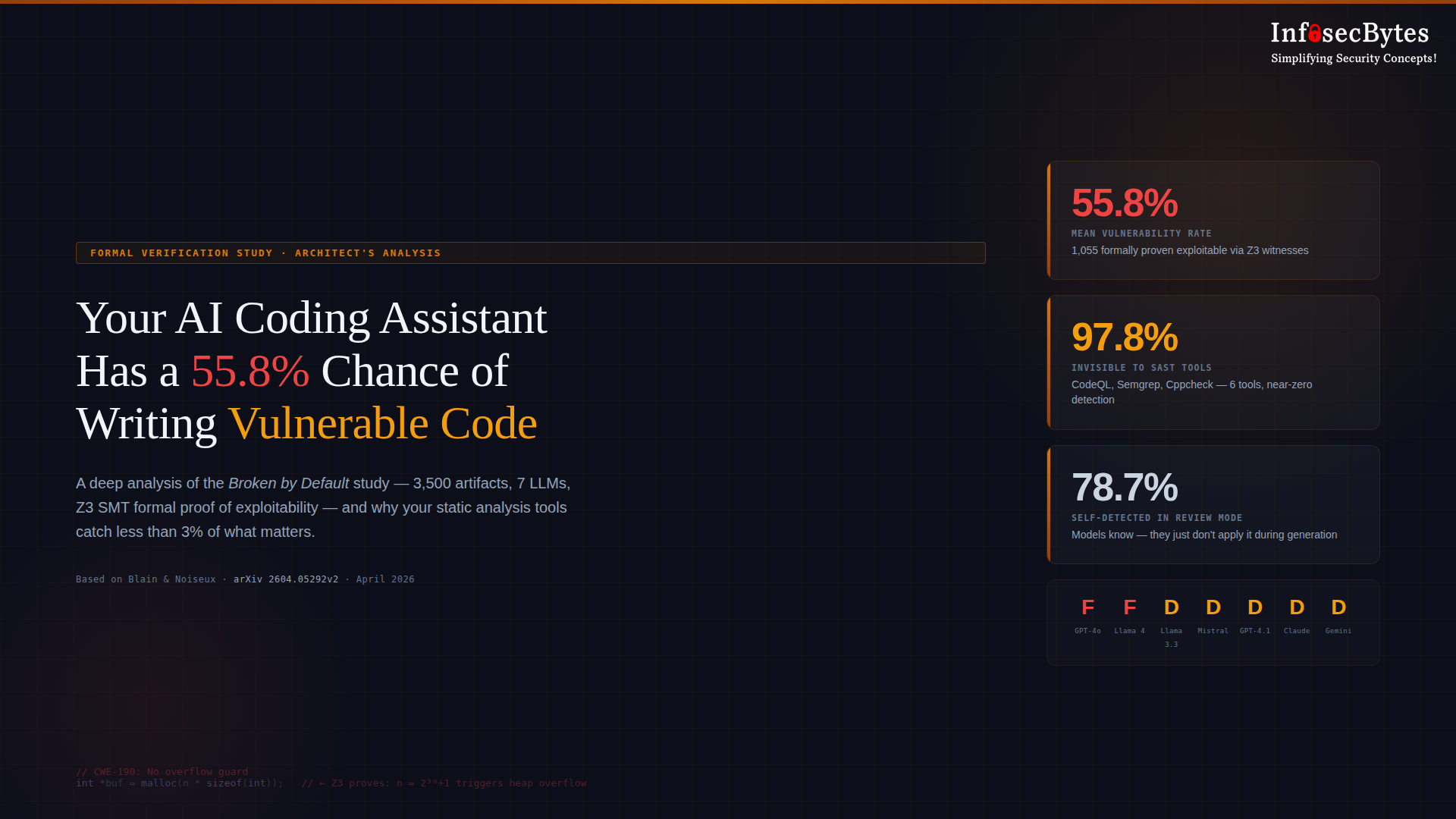

Your AI Coding Assistant Has a 55.8% Chance of Writing Vulnerable Code. And Your Tools Won’t Catch It.

A security leader’s analysis of the Broken by Default formal verification study — and what it means for every organization that hasn’t reclassified AI-generated code as untrusted input.

This analysis is based on Broken by Default: A Formal Verification Study of Security Vulnerabilities in AI-Generated Code by Blain & Noiseux (Cobalt AI). 3,500 code artifacts. 7 production LLMs. 500 security-critical prompts. Z3 SMT formal verification, not pattern matching. The full dataset is publicly available at github.com/dom-omg/bbd-dataset.

55.8% Mean Vulnerability Rate · 1,055 Z3-Proven Exploitable · 97.8% Invisible to SAST Tools · 0 / 7 Models at Grade C or Better

We built our security programs on an assumption so foundational that most of us never articulated it: the person writing the code understands what they’re writing. They know the language’s edge cases, the context of the system, the difference between code that compiles and code that’s safe. Our entire review apparatus — peer review, static analysis, penetration testing — was designed around the premise that a knowledgeable human sits at the origin of every code artifact, and our tools exist to catch the mistakes that even knowledgeable humans make.

That assumption is now invalid for a growing share of production code. And the evidence is no longer anecdotal.

A formal verification study published April 5, 2026 — Broken by Default by Dominik Blain and Maxime Noiseux of Cobalt AI — has produced the most methodologically rigorous quantification I’ve seen of AI-generated code insecurity. Not heuristic warnings. Not pattern-matching guesses. Mathematical proof of exploitability, using the Z3 SMT solver to generate concrete exploit inputs for each vulnerability identified.

The top-line number: 55.8% of AI-generated code artifacts contain at least one verified vulnerability. Across seven widely-deployed LLMs. Across 3,500 code samples. With 1,055 vulnerabilities formally proven exploitable via satisfiability witnesses — meaning the solver produced the exact input value that triggers each fault.

This post is my attempt to unpack what that means — not just as a research finding, but as an operational reality for anyone responsible for securing an enterprise software development pipeline in 2026.

From Pattern Matching to Mathematical Proof

The AI code security conversation has been building for years. Pearce et al. (2022) evaluated GitHub Copilot on 89 scenarios and found 40% of suggestions contained vulnerabilities. Perry et al. (2023) ran a controlled user study showing developers using AI assistants wrote significantly more security bugs — while reporting higher confidence in their code. Veracode’s 2025 GenAI Code Security Report tested over 100 LLMs across 80 coding tasks and found a 45% security failure rate, unchanged in their 2026 update.

These studies established the problem. Broken by Default changes the conversation because it establishes the ground truth of exploitability.

Prior work relied on CWE pattern matching — static rules that identify code structures known to be associated with vulnerability classes. This tells you something looks dangerous. It does not tell you whether an attacker can actually trigger the fault, or what input would do it.

The COBALT pipeline encodes vulnerability conditions as Z3 Satisfiability Modulo Theories formulas. For an integer overflow in malloc(n * sizeof(int)), it models n as a free variable of type BitVec(32), encodes the overflow condition under unsigned 32-bit modular arithmetic, and asks: is there a value of n that makes this expression wrap around? When Z3 returns SAT, you get a witness: a concrete value (e.g., n = 2³⁰ + 1) that you can feed directly into the program to trigger the fault. This is the difference between “this pattern is associated with CWE-190” and “here is the exact input that causes a heap buffer overflow.”
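To make the wrap-around concrete, here is a minimal Python sketch that emulates the unsigned 32-bit multiplication and checks the witness value quoted above. It does not use Z3 and is not the COBALT pipeline. The helper names (`alloc_size_u32`, `wraps`) and the assumption that `sizeof(int)` is 4 are illustrative only.

```python
# Emulate the size computation in malloc(n * sizeof(int)) under
# unsigned 32-bit modular arithmetic.
# Assumptions (not from the paper): sizeof(int) == 4, 32-bit size_t.

MASK_32 = 0xFFFFFFFF
SIZEOF_INT = 4  # assumed element size


def alloc_size_u32(n: int) -> int:
    """Bytes actually requested once the product wraps modulo 2**32."""
    return (n * SIZEOF_INT) & MASK_32


def wraps(n: int) -> bool:
    """True when the mathematically required size does not fit in 32 bits."""
    return n * SIZEOF_INT > MASK_32


witness = 2**30 + 1             # the example witness from the text
needed = witness * SIZEOF_INT   # bytes the caller intends to write
granted = alloc_size_u32(witness)

print(f"n = {witness}: caller needs {needed} bytes, malloc is asked for {granted}")
```

With this witness the caller believes it has room for more than four billion bytes while the allocator is asked for only 4, which is the gap a heap buffer overflow exploits.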

The study evaluated seven models — GPT-4o, GPT-4.1, Claude Haiku 4.5, Gemini 2.5 Flash, Mistral Large, Llama 3.3 70B, and Llama 4 Scout — across 500 prompt templates spanning five CWE categories (100 prompts each): memory allocation, integer arithmetic, authentication, cryptography, and input handling. All models were queried at temperature 0 for reproducibility. Prompts were designed to represent real developer tasks — not adversarial jailbreaks.

The Leaderboard Nobody Wins

Here is the aggregate benchmark across all 500 prompts per model. I use the word “leaderboard” loosely — nobody wins here.

| Model | Vuln Rate | Critical | High | Z3 Proven | Grade |
|---|---|---|---|---|---|
| GPT-4o | 62.4% | 166 | 106 | 167 | F |
| Llama 4 Scout | 60.6% | 167 | 95 | 156 | F |
| Llama 3.3 70B | 58.4% | 168 | 83 | 147 | D |
| Mistral Large | 57.8% | 155 | 94 | 155 | D |
| GPT-4.1 | 54.0% | 142 | 86 | 136 | D |
| Claude Haiku 4.5 | 49.2% | 155 | 81 | 152 | D |
| Gemini 2.5 Flash | 48.4% | 146 | 86 | 142 | D |
| Mean | 55.8% | 157.0 | 90.1 | 150.7 | — |

Grading scale: A <10% · B 10–29% · C 30–44% · D 45–59% · F ≥60% vulnerability rate. CVSS v3: Critical ≥9.0 · High 7.0–8.9.
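The grading scale can be applied mechanically to the table. The sketch below is my own reading of the scale, treating each band as a half-open interval (for example, anything from 45% up to but not including 60% is a D); the function name `grade` is hypothetical. The rates are copied from the table above.

```python
# Map a vulnerability rate (in percent) to the letter grade used in the table.
# Assumption: bands are half-open, e.g. D covers [45, 60).

def grade(vuln_rate_pct: float) -> str:
    if vuln_rate_pct < 10:
        return "A"
    if vuln_rate_pct < 30:
        return "B"
    if vuln_rate_pct < 45:
        return "C"
    if vuln_rate_pct < 60:
        return "D"
    return "F"


# Vulnerability rates as reported in the table.
rates = {
    "GPT-4o": 62.4,
    "Llama 4 Scout": 60.6,
    "Llama 3.3 70B": 58.4,
    "Mistral Large": 57.8,
    "GPT-4.1": 54.0,
    "Claude Haiku 4.5": 49.2,
    "Gemini 2.5 Flash": 48.4,
}

grades = {model: grade(rate) for model, rate in rates.items()}
mean_rate = round(sum(rates.values()) / len(rates), 1)

for model, rate in rates.items():
    print(f"{model:18s} {rate:5.1f}%  {grades[model]}")
print(f"{'Mean':18s} {mean_rate:5.1f}%")
```

Recomputing the mean this way reproduces the 55.8% headline figure from the seven per-model rates.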

No model achieves a grade better than D. The best performer — Gemini 2.5 Flash — still generates vulnerable code 48.4% of the time. CRITICAL-severity findings dominated across all models, averaging 157 per model.

Where the Failures Concentrate

| Category | Vulnerability Rate | CWEs |
|---|---|---|
| Integer Arithmetic | 87% | CWE-190/195 |
| Memory Allocation | 67% | CWE-131/190 |
| Input Handling | 56% | CWE-89/22/78 |
| Authentication | 44% | CWE-916 |
| Cryptography | 25% | CWE-327/330 |

Integer arithmetic prompts produced the highest vulnerability rate (87%), nearly nine out of ten, followed by memory allocation (67%). Both were driven by consistent failure to guard against integer overflow in malloc size computations and by signed/unsigned conversion errors. A representative pattern found across all seven models is an allocation such as malloc(n * sizeof(int)) with no overflow check on the size computation.

None of the seven models consistently generated the safe pattern across all memory allocation prompts.
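The paper's own listing of the safe pattern is not reproduced here. As an assumption on my part, one common guard is to compare n against the maximum size divided by the element size before multiplying. The Python sketch below mirrors that check for a 32-bit size type; `checked_alloc_size` and `SIZE_MAX_32` are hypothetical names.

```python
# Sketch of an overflow guard on an allocation size computation.
# Mirrors the usual C idiom "reject if n > SIZE_MAX / elem_size".
# Assumptions: 32-bit size_t, 4-byte elements by default.

from typing import Optional

SIZE_MAX_32 = 0xFFFFFFFF


def checked_alloc_size(n: int, elem_size: int = 4) -> Optional[int]:
    """Return n * elem_size, or None if the product would not fit in 32 bits."""
    if n < 0 or elem_size <= 0:
        return None  # negative counts are the signed/unsigned trap
    if n > SIZE_MAX_32 // elem_size:
        return None  # multiplication would wrap
    return n * elem_size


print(checked_alloc_size(10))          # small request is allowed
print(checked_alloc_size(2**30 + 1))   # wrapping request is rejected
```

The division happens before the multiplication, so the check itself can never overflow.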

These Aren’t Theoretical — They Crash Real Programs

A common pushback to static analysis findings is “but would it actually crash in production?” The researchers addressed this directly. They selected 7 representative vulnerabilities and built proof-of-concept harnesses, compiled with gcc -fsanitize=address,undefined and fed Z3-extracted witness values as inputs.

| PoC ID | Model | Fault Type | Result |
|---|---|---|---|
| MEM-01-A | Llama | heap-buffer-overflow | ✓ Confirmed |
| MEM-01-B | GPT-4o | heap-buffer-overflow | ✓ Confirmed |
| MEM-03 | Llama | alloc-size-too-big | ✓ Confirmed |
| MEM-06 | GPT-4o | OOB read | ✓ Confirmed |
| AUTH-03 | Llama | SHA-256 crack (0.01ms) | ✓ Confirmed |
| INP-01 | Mistral | SQL injection → full exfil | ✓ Confirmed |
| INP-06 | GPT-4o | Zip Slip path traversal | † Blocked by runtime |

† Python 3.12 raises ValueError on path traversal. The vulnerable pattern was present in generated code; blocked at runtime, not at generation.
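Code that should not depend on the runtime for this protection can check containment itself before writing any extracted file. The following is a minimal sketch of such a check, not the study's harness; `is_within` is a hypothetical helper and the paths are made up.

```python
# Sketch of a Zip Slip guard: refuse archive member names that would
# resolve to a location outside the extraction directory.

import os


def is_within(base_dir: str, member_name: str) -> bool:
    """True if member_name, joined onto base_dir, stays inside base_dir."""
    base = os.path.realpath(base_dir)
    target = os.path.realpath(os.path.join(base, member_name))
    try:
        return os.path.commonpath([base, target]) == base
    except ValueError:
        # Raised for paths that cannot be compared (e.g. different drives).
        return False


for name in ["docs/readme.txt", "../../etc/passwd", "/etc/passwd"]:
    verdict = "ok" if is_within("/tmp/extract", name) else "rejected"
    print(f"{name!r}: {verdict}")
```

Both the relative `..` escape and the absolute path are rejected because the resolved target no longer shares the extraction directory as its common prefix.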

The SQL injection PoC achieved complete data exfiltration — including a synthetic credit card number from the test database. The SHA-256 password PoC recovered a 6-character password in 0.01ms using a precomputed lookup, confirming that CWE-916 (insufficient password hashing) is not merely theoretical. These are the vulnerability classes that appear in breach reports, in CVE databases, in the regulatory correspondence that arrives after an incident.
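For readers who have not seen this class of bug recently, here is a small self-contained illustration using Python's built-in sqlite3 module. It is not the study's INP-01 harness; the table, the card numbers, and the function names are invented for the example.

```python
# Illustration of SQL injection via string formatting versus a
# parameterized query. All data here is synthetic.

import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, card TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [("alice", "4111-0000-0000-0001"), ("bob", "4111-0000-0000-0002")],
)


def lookup_vulnerable(name: str):
    # User input is pasted into the SQL text, so it can change the query.
    query = f"SELECT name, card FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def lookup_safe(name: str):
    # The placeholder keeps the input as data, never as SQL.
    return conn.execute(
        "SELECT name, card FROM users WHERE name = ?", (name,)
    ).fetchall()


payload = "x' OR '1'='1"
leaked = lookup_vulnerable(payload)   # returns every row in the table
blocked = lookup_safe(payload)        # returns nothing: no user has that name

print("vulnerable:", leaked)
print("safe:", blocked)
```

The only difference between the two functions is whether the input reaches the database as part of the query text or as a bound parameter.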

This Isn’t an Isolated Finding

Broken by Default lands in an environment where the evidence of AI code insecurity is converging from multiple independent sources, including the corroborating sources listed at the end of this post.

This is not one alarming study. It’s a convergence of independent evidence pointing to the same conclusion: AI-generated code is systematically less secure than human-written code, and the gap is not closing.

What Should Change How You Govern AI-Assisted Development

Finding 01 — Security Prompts Are Security Theater

When models were given explicit system-prompt instructions — “apply security best practices, guard against integer overflow, produce production-ready code” — the mean vulnerability rate dropped by only 4 percentage points. From 64.8% to 60.8%. Four of five models remained at grade F. One model (Llama 3.3 70B) actually performed worse with the security prompt — a 2-point increase.

The improvement was category-dependent. Authentication and cryptography showed modest gains. Memory allocation vulnerabilities were essentially unchanged across all models. Security instructions do not override low-level memory management patterns learned from training data. If you have “use security-focused prompts” listed as a compensating control in a risk register, remove it. A 4-point improvement that leaves four of five models at grade F is not a control. It’s noise.

Finding 02 — Your Static Analysis Stack Has a Structural Blind Spot

Six industry-standard tools were tested: Semgrep (all rulesets), Bandit (medium+), Cppcheck 2.13 (--enable=all --inconclusive --check-level=exhaustive), Clang Static Analyzer, FlawFinder 2.0, and CodeQL v2.25.1 (security-extended query suite).

| Analysis Layer | Tool(s) | Detection Rate | Z3-Proven Caught |
|---|---|---|---|
| COBALT (Z3 SMT) | Z3 formal verification | 64.8% (162/250) | 90/90 (100%) |
| Pattern-based | Semgrep + Bandit | 7.6% (19/250) | 2/90 (2.2%) |
| Heavyweight C | Cppcheck + Clang SA + FlawFinder | 4.6% (4/87 C) | 0/68 (0%) |
| Semantic Analysis | CodeQL v2.25.1 (security-extended) | 0% (0/90) | 0/90 (0%) |

CodeQL, widely considered the most sophisticated semantic analyzer available, detected zero of 90 formally proven findings: 0/68 in C and 0/22 in Python. The 2 catches by Semgrep flagged strcat (a dangerous string function) in the same code; they never detected the integer overflow that Z3 proved exploitable. This is not a configuration issue. It’s a structural limitation: integer overflow in allocation arithmetic requires reasoning about the full domain of integer inputs under 32-bit modular arithmetic. No pattern matcher or taint tracker can do this.
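The percentages in the table follow directly from the raw counts. The short sketch below recomputes them; reading the 97.8% headline as 88 missed out of 90 Z3-proven findings is my interpretation of the table, and `pct` is a hypothetical helper.

```python
# Recompute the detection percentages from the counts in the table.

def pct(hits: int, total: int) -> float:
    """Percentage rounded to one decimal place."""
    return round(100 * hits / total, 1)


overall = {
    "COBALT (Z3 SMT)": (162, 250),
    "Semgrep + Bandit": (19, 250),
    "Cppcheck + Clang SA + FlawFinder": (4, 87),
}

for layer, (hits, total) in overall.items():
    print(f"{layer:34s} {pct(hits, total):5.1f}%  ({hits}/{total})")

z3_proven = 90
caught_by_sast = 2   # both from Semgrep + Bandit; all other tools caught 0
missed_pct = pct(z3_proven - caught_by_sast, z3_proven)
print(f"Z3-proven findings missed by all SAST tools: {missed_pct}%")
```

On this reading, the headline number is simply the complement of the two Semgrep hits.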

Finding 03 — The Models Know Better. They Just Don’t Do Better.

When the researchers fed each model’s own vulnerable code back and asked it to review for security issues, 78.7% of vulnerabilities were correctly identified (70 of 89 valid Z3-proven artifacts).

| Model | Detected | Rate | False Negative Rate |
|---|---|---|---|
| Mistral Large | 17/17 | 100% | 0% |
| Llama 3.3 70B | 14/17 | 82% | 18% |
| Gemini 2.5 Flash | 14/18 | 78% | 22% |
| Claude 3.5 Sonnet | 13/19 | 68% | 32% |
| GPT-4o | 12/18 | 67% | 33% |
| Total | 70/89 | 78.7% | 21.3% |

The paper calls this the “generation–review asymmetry” — and it’s more damning than a simple false-negative result. The models possess the security knowledge. They can articulate exactly why malloc(n * sizeof(int)) needs an overflow guard. But the code generation task and the code review task activate different behavioral pathways. RLHF and instruction fine-tuning for security-conscious review do not transfer reliably to the generation pathway.

“The problem is not a lack of security knowledge — it is a failure of spontaneous application. Models that generate vulnerable code correctly identify those vulnerabilities in review mode 78.7% of the time, yet generate them at 55.8% by default. This is an observed generation–review asymmetry that explicit security prompting does not resolve.”

— Blain & Noiseux, Broken by Default (arXiv 2604.05292v2)

Finding 04 — Runtime Exploitability Is Confirmed, Not Hypothetical

Six of seven selected PoCs were confirmed at runtime. Four produced memory faults under AddressSanitizer, the SQL injection PoC achieved complete data exfiltration, and the SHA-256 password PoC cracked in 0.01ms. The single “miss”, Zip Slip path traversal, was blocked by Python 3.12’s runtime check, not by anything the model generated. The language saved the developer. The model did not.
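The speed of the SHA-256 result comes from the lookup being a single dictionary access once the table exists. The sketch below shows the idea at a deliberately tiny scale: it uses 3-letter lowercase passwords rather than the 6-character password in the study, and the names (`sha256_hex`, `table`) are my own. The PBKDF2 contrast at the end is a standard-library illustration, not something the study tested.

```python
# Precomputed-lookup attack on unsalted SHA-256 password hashes,
# scaled down to 3 lowercase letters so it runs instantly.

import hashlib
import os
import string
from itertools import product


def sha256_hex(password: str) -> str:
    return hashlib.sha256(password.encode()).hexdigest()


# Build the table once: hash -> password for every candidate.
table = {
    sha256_hex("".join(chars)): "".join(chars)
    for chars in product(string.ascii_lowercase, repeat=3)
}

stored_hash = sha256_hex("cat")       # what a CWE-916 style system would store
recovered = table.get(stored_hash)    # one dictionary lookup recovers it
print("recovered:", recovered)

# Contrast: a salted, iterated hash of the same password is not in the table.
salt = os.urandom(16)
slow_hash = hashlib.pbkdf2_hmac("sha256", b"cat", salt, 100_000).hex()
print("salted hash found in table:", slow_hash in table)
```

A per-user salt forces the attacker to rebuild the table for every account, and the iteration count makes each candidate far more expensive to try.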

What Security Leaders Should Do This Quarter

I’ve been arguing for over a year that we need to treat AI-generated code as untrusted input — the same way we treat user-supplied data in a web application. This study provides the mathematical foundation for that position. Here is what I think security leaders should be doing, starting now.

01 — Reclassify AI-Generated Code in Your Risk Framework

Stop treating it as “developer code with a productivity boost.” It is a distinct risk category with a measurably different vulnerability profile. Your risk register should reflect this. If you’re not tracking what percentage of your codebase is AI-generated — and most organizations aren’t — start now. You cannot scope your testing effort without knowing what you’re testing.

02 — Remove Prompt Engineering From Your Controls Catalog

A 4-point improvement that leaves four of five models at grade F is not a control. It’s noise. Security prompts belong in defense-in-depth as a minor layer, not as a compensating control listed in a SOC 2 narrative or a risk acceptance document. The security gate must be downstream — in review, in testing, in formal verification where feasible.

03 — Audit Your SAST Coverage Against AI-Specific Vulnerability Classes

Ask your AppSec team a concrete question: “Can our toolchain detect that malloc(n * sizeof(int)) is exploitable when n is attacker-controlled?” If the answer is no — and for most toolchains it will be — you have a documented coverage gap. Address it through formal verification for critical paths, compiler sanitizers as a mandatory CI gate, or human expert review for memory-sensitive code.

04 — Make Compiler Sanitizers Mandatory

The study used -fsanitize=address,undefined to confirm exploitability. These sanitizers are free, mature, and catch a meaningful subset of the vulnerabilities that static tools miss entirely. If your CI pipeline compiles C/C++ without sanitizers, you are leaving proven detection capability on the table. This is one of the simplest, highest-ROI changes you can make.
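One way to enforce this in CI is to reject compile commands that lack the sanitizer flags. The sketch below is a hypothetical pre-build check, not a feature of any particular CI system; `missing_sanitizers` and `REQUIRED` are invented names, and it only inspects `-fsanitize=` arguments (it does not account for `-fno-sanitize=` overrides).

```python
# Hypothetical CI gate: report which required sanitizers a compile
# command line fails to enable.

from typing import List, Set

REQUIRED: Set[str] = {"address", "undefined"}


def missing_sanitizers(argv: List[str]) -> Set[str]:
    """Return the required sanitizers not enabled by any -fsanitize= flag."""
    enabled: Set[str] = set()
    for arg in argv:
        if arg.startswith("-fsanitize="):
            enabled.update(arg.split("=", 1)[1].split(","))
    return REQUIRED - enabled


commands = [
    ["gcc", "-fsanitize=address,undefined", "poc.c", "-o", "poc"],
    ["gcc", "-O2", "-fsanitize=address", "poc.c", "-o", "poc"],
    ["gcc", "-O2", "poc.c", "-o", "poc"],
]

for cmd in commands:
    gap = missing_sanitizers(cmd)
    status = "ok" if not gap else f"missing {sorted(gap)}"
    print(" ".join(cmd), "->", status)
```

A real gate would fail the build when the returned set is non-empty for any test or fuzzing target.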

05 — Implement a Mandatory Two-Pass Workflow

If models detect 78.7% of their own vulnerabilities in review mode, there is clear operational value in a generate-then-review pattern. This is not a complete solution — the 21.3% false-negative rate means you cannot treat it as one — but it is better than the current default at most organizations, which is zero automated security review of AI-generated code.
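A generate-then-review pass can be wired up with very little code. The sketch below shows only the control flow; `generate` and `review` are placeholders for whatever model calls an organization actually uses, and the stub implementations exist purely so the example runs. Nothing here is an API from the study.

```python
# Sketch of a two-pass workflow: generate code, then run a separate
# review pass over the output and record any findings.

from dataclasses import dataclass
from typing import Callable, List


@dataclass
class TwoPassResult:
    code: str
    findings: List[str]
    flagged: bool  # True when the review pass reported anything


def generate_then_review(
    prompt: str,
    generate: Callable[[str], str],
    review: Callable[[str], List[str]],
) -> TwoPassResult:
    code = generate(prompt)
    findings = review(code)
    return TwoPassResult(code=code, findings=findings, flagged=bool(findings))


# Stand-in stubs (assumptions): a real pipeline would call a model here.
def fake_generate(prompt: str) -> str:
    return "int *buf = malloc(n * sizeof(int));"


def fake_review(code: str) -> List[str]:
    if "malloc(" in code and "SIZE_MAX" not in code:
        return ["allocation size computed without an overflow check"]
    return []


result = generate_then_review("allocate an int array", fake_generate, fake_review)
print(result.flagged, result.findings)
```

Because of the false-negative rate noted above, an unflagged result should still flow into the downstream gates rather than being treated as cleared.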

06 — Prohibit Unsupervised AI in High-Risk Domains

Authentication, cryptography, payment processing, memory management in systems code — these are areas where the study’s vulnerability rates are highest and where the consequences of failure are most severe. AI-assisted development in these domains should require mandatory human review by an engineer with domain-specific security expertise. Not a general code review. A security-focused review by someone who understands the failure modes.

07 — Brief the Board

1.8M+ Copilot Paid Subscribers · 46% GitHub Code Is AI-Generated · 57% Devs Using AI Without IT Approval · 25% YC W25 Codebases 95% AI

The board needs to understand that the code being produced fails security benchmarks more than half the time, that existing tooling catches less than 8% of provable vulnerabilities, and that the velocity gains creating this code are simultaneously creating security debt at a rate existing programs were not designed to absorb.

The Uncomfortable Truth

The AI coding revolution has delivered genuine productivity gains. I am not arguing we should ban these tools. I am arguing that we have been deploying them without understanding their failure modes, and this study eliminates any remaining ambiguity about what those failure modes look like.

55.8% vulnerability rate. 1,055 formal proofs of exploitability. 97.8% invisible to industry-standard tools. Security prompts that barely move the needle. Models that know better but don’t do better.

The title of the paper is Broken by Default. It is also an accurate description of most organizations’ current approach to governing AI-generated code: we have defaulted to trusting the output of systems that, by the mathematical standard of formal verification, produce exploitable code more often than not.

Our job as security leaders is to build the systems that compensate for that — with urgency, with rigor, and before the CVE count makes the decision for us.

- AI-generated code is insecure by default — 55.8% vulnerability rate across 7 models, with 1,055 formally proven exploitable findings

- Security prompts reduce vulnerability rates by only 4 points — this is not a compensating control

- Six industry SAST tools combined miss 97.8% of Z3-proven vulnerabilities — a structural blind spot, not a configuration issue

- Models detect 78.7% of their own vulnerabilities in review mode — useful as a layer, insufficient as a solution

- Formal verification (Z3 SMT solving) is the only methodology that establishes ground-truth exploitability at scale

- Treat AI-generated code as untrusted input. Gate downstream. Brief the board. Act now.

Study: Blain & Noiseux, Broken by Default: A Formal Verification Study of Security Vulnerabilities in AI-Generated Code, arXiv:2604.05292v2, April 2026. Dataset: github.com/dom-omg/bbd-dataset. Prompts and scripts: github.com/dom-omg/broken-by-default.

Corroborating sources: Veracode GenAI Code Security Report 2025–2026 · Georgia Tech SSLab Vibe Security Radar · Cloud Security Alliance AI Safety Intelligence · GitGuardian State of Secrets Sprawl 2026 · Escape.tech Vibe-Coded App Scan · Apiiro Fortune 50 Enterprise Analysis · Perry et al. (ACM CCS 2023) · Pearce et al. (IEEE S&P 2022).

Nandkishor is a Principal Security Architect / Security Leader. Views expressed are their own.