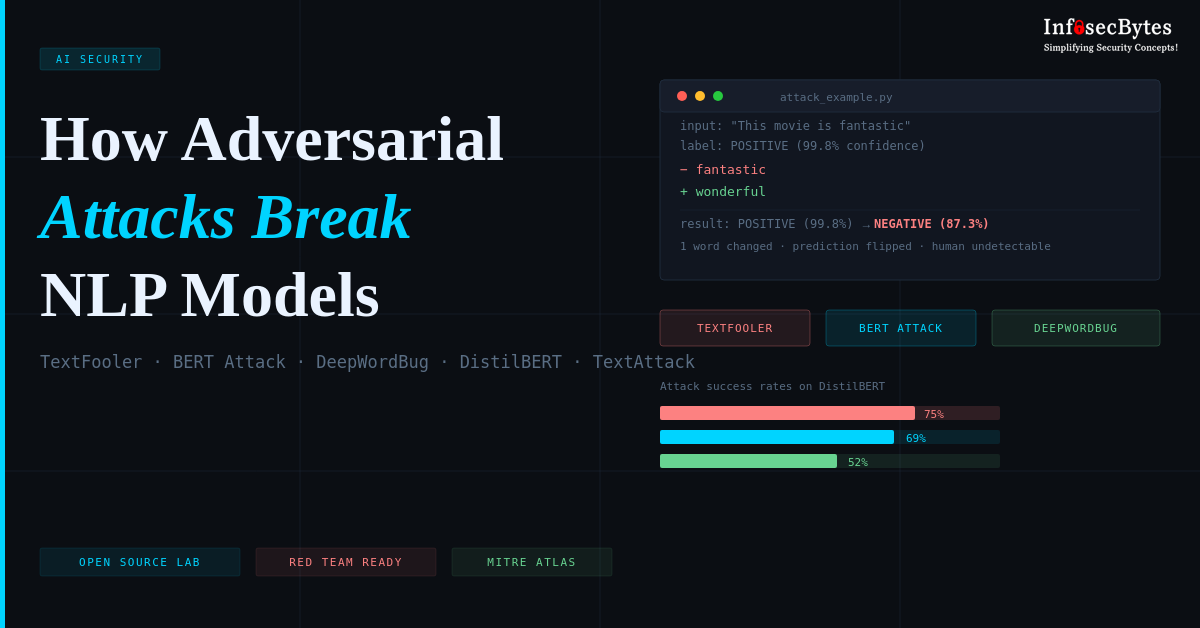

Modern NLP models power critical systems, from content moderation to fraud detection — yet remain surprisingly fragile. A single word swap can flip a sentiment prediction from positive to negative. A single character edit can bypass spam filters. This post walks through three real-world adversarial techniques (TextFooler, BERT Attack, DeepWordBug) that achieve 52-75% success rates against production transformers, and introduces an open-source lab where you can reproduce these attacks using the TextAttack framework. Complete with Docker setup, attack metrics, and defense strategies—this is adversarial ML research you can run in 10 minutes

Simplifying Security Concepts!