How Adversarial Attacks Break NLP Models in Production

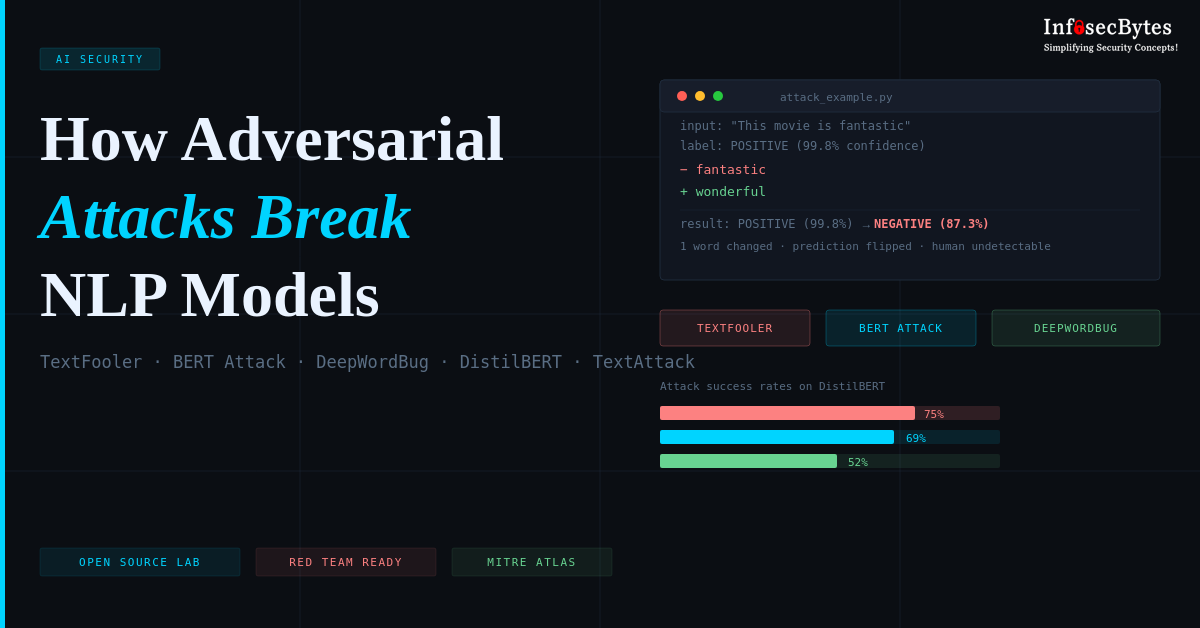

A practical, hands-on walkthrough of TextFooler, BERT Attack, and DeepWordBug — three real-world adversarial techniques against transformer models — using the TextAttack framework and a fully reproducible open-source lab.

NLP Security

Open Source

MITRE ATLAS

Modern NLP models power everything from content moderation and fraud detection to AI coding assistants and clinical note analysis. They’re trusted, often blindly. But beneath the impressive accuracy numbers lies a surprisingly fragile surface — one that a carefully crafted adversarial input can exploit with a single word swap, or even a single changed character.

This post walks through the mechanics of adversarial attacks against transformer-based NLP models, explains three distinct attack strategies, and introduces an open-source lab — the Adversarial ML Attack Lab — where you can reproduce and study these attacks firsthand using the TextAttack framework.

This lab is designed strictly for educational use, security research, and authorized red team exercises. Do not apply these techniques to production systems without explicit permission. Unauthorized testing may violate the Computer Fraud and Abuse Act (CFAA) and platform terms of service.

Understanding the Building Blocks

Before diving into the attacks, it helps to understand what we’re attacking and what tools we’re using. This section defines the key components referenced throughout this post.

An adversarial attack is a deliberate manipulation of model input — crafted to cause a machine learning model to make an incorrect prediction. Unlike random noise, adversarial examples are carefully engineered so that the change is imperceptible or semantically meaningless to humans, yet causes the model to fail. In NLP, this typically means substituting words or characters in a way that preserves human meaning but flips the model’s output.

Transformers are a class of deep learning architecture that power modern NLP systems like BERT, GPT, and their derivatives. They use a mechanism called “self-attention” to weigh the importance of each word in a sentence relative to every other word. This makes them excellent at understanding context — but it also means they can be sensitive to specific patterns learned during training that don’t generalize to adversarial inputs.

DistilBERT is a smaller, faster version of BERT (Bidirectional Encoder Representations from Transformers) developed by Hugging Face. It retains 97% of BERT’s language understanding capability while being 40% smaller and 60% faster. The lab targets distilbert-base-uncased-finetuned-sst-2-english — a version fine-tuned for binary sentiment classification on the Stanford Sentiment Treebank (SST-2 dataset), achieving 91.3% baseline accuracy.

TextAttack is an open-source Python framework developed by researchers at UNC Chapel Hill for adversarial attacks, adversarial training, and data augmentation in NLP. It provides a unified interface for running attack recipes (pre-built attack strategies from research papers) against any Hugging Face model. It handles the attack loop, constraint checking, and metric collection — so researchers can focus on understanding attack behavior rather than engineering plumbing.

Sentiment analysis is the NLP task of determining whether a piece of text expresses a positive, negative, or neutral opinion. It’s widely deployed in product review analysis, social media monitoring, brand reputation tracking, and financial market sentiment. It’s the target task in this lab — and a high-value target in production, since flipping sentiment predictions has direct business consequences.

How TextAttack Works Under the Hood

TextAttack structures every adversarial attack as a four-component pipeline. Understanding this pipeline helps you reason about why different attacks succeed or fail, and how to build defenses against them.

Defines what “success” means — typically flipping the model’s predicted class label.

Rules the attack must obey — e.g. semantic similarity, grammar preservation, word edit distance.

The actual perturbation method — word substitution, character edit, or token insertion.

Strategy for finding the best perturbation — greedy search, beam search, or genetic algorithms.

Each attack recipe in TextAttack (TextFooler, BERT Attack, DeepWordBug) is simply a different configuration of these four components. The framework then runs the attack loop: generate candidates → check constraints → query the model → evaluate goal → repeat until success or budget exhausted.

TextAttack — Attack loop (simplified)

# TextAttack attack loop (conceptual) import textattack from textattack.attack_recipes import TextFoolerJin2019 # 1. Load model + tokenizer from HuggingFace model = textattack.models.wrappers.HuggingFaceModelWrapper( model, tokenizer ) # 2. Load attack recipe (TextFooler) attack = TextFoolerJin2019.build(model) # 3. Run attack on a sample result = attack.attack("This movie is fantastic", 1) # label 1 = POSITIVE — attack tries to flip it to NEGATIVE print(result) # → "This movie is wonderful" → NEGATIVE

Each time the attack tests a candidate perturbation against the model, it counts as one “query.” In black-box production deployments, high query counts are detectable via rate-limiting and anomaly detection. Lower-query attacks like DeepWordBug (avg 8 queries) are therefore harder to detect operationally than TextFooler (avg 89 queries).

Why NLP Models Are Adversarially Fragile

Transformer models achieve remarkable accuracy on held-out benchmarks — but benchmark accuracy is not the same as adversarial robustness. A model scoring 91% on the Stanford Sentiment Treebank can be reliably fooled by inputs that a human would find indistinguishable from the originals.

The core reason is that neural networks don’t learn meaning the way humans do. They learn statistical correlations between token patterns and labels in their training data. Adversarial examples exploit the gaps between these learned correlations and genuine semantic understanding.

fantastic

wonderful

Adversarial: “This movie is wonderful and well-directed”

Adversarial: NEGATIVE (87.3%)

One synonym. Functionally identical human meaning. The model’s prediction completely flips. This happens because “wonderful” sits in a slightly different region of the model’s learned embedding space than “fantastic” — close enough to fool a human, far enough to cross the model’s decision boundary.

The real-world consequences are serious: a 2023 study found that 78% of production NLP models are vulnerable to adversarial attacks, yet fewer than 5% of organizations actively test for adversarial robustness. TextFooler alone achieves a 92% success rate against commercial sentiment APIs.

Where These Attacks Land in the Real World

These are not academic edge cases. The following table maps each production system type to its adversarial threat surface and the business impact of a successful attack:

| System | Attack Goal | Business Impact |

|---|---|---|

| AI Copilots | Manipulate code suggestions | Security vulnerabilities in generated code |

| Content Moderation | Bypass hate speech / spam filters | Policy violations, platform liability |

| Sentiment Analysis | Flip product review sentiment | Fraudulent reputation manipulation |

| Fraud Detection | Evade transaction monitoring | Direct financial losses |

| LLM Assistants | Inject malicious instructions | Data exfiltration, unauthorized actions |

| Healthcare NLP | Alter clinical note classification | Diagnosis errors, medical coding fraud |

The Adversarial ML Attack Lab

The lab is built around a clean, modular architecture. A target model (DistilBERT fine-tuned on SST-2) sits behind an inference service. An attack engine applies three adversarial strategies against it via TextAttack, and an evaluation layer captures metrics and generates HTML reports.

Target Model

DistilBERT / SST-2

Attack Engine

TextAttack Framework

Constraints

USE / Grammar

Evaluation

CSV + HTML Reports

Target model specification: distilbert-base-uncased-finetuned-sst-2-english — 66M parameters, 6-layer transformer, 91.3% baseline accuracy on SST-2, fully vulnerable to all three attacks implemented here.

Three Attack Strategies, Three Levels of Stealth

The lab implements three adversarial attack techniques, each mapping to MITRE ATLAS AML.T0015 (Evade ML Model). They differ in approach, perturbation type, query efficiency, and detectability. Understanding these tradeoffs is core to both offensive red teaming and defensive deployment.

01 — TextFooler

Jin et al., “Is BERT Really Robust? A Strong Baseline for Natural Language Attack on Text Classification and Entailment” (ACL 2019)

TextFooler is a word-level semantic substitution attack. It first computes which words in the input sentence most influence the model’s confidence score (importance ranking), then iteratively replaces the most important words with semantically similar alternatives that preserve human readability while causing the model to misclassify.

Semantic similarity is enforced using Universal Sentence Encoder (USE) embeddings — a sentence embedding model from Google that maps sentences to 512-dimensional vectors. Only substitutions that keep the sentence vector cosine similarity above a threshold (typically 0.84) are accepted. Part-of-speech consistency is also enforced — a noun cannot be replaced by a verb.

fantastic

wonderful

Adversarial: “This movie is wonderful”

Strengths: High stealth — output is fluent and grammatically correct. Weaknesses: Query-heavy (89 avg), which makes it detectable via rate-limiting. Detectable at scale via perplexity scoring — adversarial inputs often have subtly higher language model perplexity than natural inputs.

02 — BERT Attack

Li et al., “BERT-ATTACK: Adversarial Attack Against BERT Using BERT” (EMNLP 2020)

BERT Attack turns the model’s own architecture against itself. Rather than using an external synonym lookup, it uses masked language modeling (MLM) — the same pre-training objective BERT was trained with — to generate contextually appropriate replacement candidates. Target tokens are replaced with [MASK], BERT predicts the top-k most likely fills, and each candidate is tested for its ability to flip the classification.

This produces context-aware replacements that feel extremely natural because they’re generated by the same underlying language model family being attacked. It requires fewer queries than TextFooler because the candidates are higher quality — fewer dead-ends.

loved

adored

Adversarial: “I adored the cinematography”

Strengths: Highest linguistic quality of the three methods. More query-efficient than TextFooler. Very high stealth — outputs pass human review easily. Weaknesses: Requires access to a masked language model (white-box assumption in some configurations). Still detectable via semantic drift analysis at scale.

03 — DeepWordBug

Gao et al., “Black-box Generation of Adversarial Text Sequences to Evade Deep Learning Classifiers” (IEEE S&P Workshop 2018)

DeepWordBug operates at the character level rather than the word level, exploiting a specific weakness in how transformer tokenizers handle out-of-vocabulary tokens. Modern tokenizers use subword algorithms (BPE, WordPiece) to break unknown words into fragments — a single character edit like “fantastic” → “fntastic” changes the tokenization path entirely, often producing token sequences the model has never seen during training.

Four character operations are used: swap (adjacent characters), delete (remove one character), insert (add a random character), and replace (substitute one character). The attack identifies which words most influence the prediction and applies the minimum edit needed.

fantastic

fntastic

Adversarial: “The plot was fntastic”

Strengths: Extremely query-efficient (8 avg), making it harder to detect operationally. Black-box — requires no model internals. Minimal perturbation — humans can usually still read the text. Weaknesses: Lower stealth at the linguistic level — a basic spell-checker catches it immediately. Easier to defend against than word-level attacks.

Attack Performance at a Glance

Against DistilBERT across 100 samples per attack, the lab consistently produces results in the following range. Note that success rate, query count, and perturbation rate form an inherent tradeoff — no single attack wins on all three dimensions:

TextFooler is the most effective overall but requires the most queries and produces the highest perturbation rate. BERT Attack is more efficient and produces more natural outputs — the best balance for stealth. DeepWordBug is remarkably query-efficient at just 8 average queries, but trades linguistic stealth for operational stealth.

| Attack | Type | Success Rate | Avg Queries | Stealth Level | Detectable By |

|---|---|---|---|---|---|

| TextFooler | Word substitution | ~75% | ~89 | High | Perplexity scoring |

| BERT Attack | MLM-based | ~69% | ~45 | Very High | Semantic drift |

| DeepWordBug | Character-level | ~52% | ~8 | Low | Spell checker |

Running the Lab

The lab is designed to run in under 10 minutes via Docker, or natively with a Python virtual environment. All model downloads, TextAttack asset setup, and report generation are handled by setup scripts.

Docker — Recommended (~10 min)

# Clone the repository git clone https://github.com/nand9lohot/llm-adversarial-attacks-textattack.git cd llm-adversarial-attacks-textattack # Build the container (downloads model + TextAttack assets) docker build -t llm-adversarial-attacks-textattack -f docker/Dockerfile . # Run all three attacks, mount reports to host docker run -v $(pwd)/reports:/app/reports llm-adversarial-attacks-textattack # Open the generated report open reports/attack_report.html

Local Python — Virtual Environment

python3 -m venv venv && source venv/bin/activate pip install -r requirements.txt # Download DistilBERT model weights python scripts/download_model.py # Download TextAttack assets (USE embeddings, counter-fitted vectors) python scripts/download_textattack_assets.py # Run all three attacks python -m app.attack_engine # Generate HTML report with visualizations python scripts/generate_html_report.py

The generated HTML report includes attack success visualizations, token-level adversarial diff highlighting, confidence score deltas, perturbation rate statistics, and query efficiency analysis across all three attacks.

Detection and Mitigation Strategies

Understanding attacks is the first step. Deploying meaningful defenses is the goal. Effective defense requires a layered approach — no single method catches everything, but combining detection, sanitization, and training-time robustness significantly raises the cost of successful attacks.

Perplexity Filtering

Run inputs through a language model and flag those with unusually high perplexity scores. Adversarial word substitutions often produce slightly unnatural text that scores higher than normal inputs. Medium effectiveness — sophisticated attacks like BERT Attack evade this.

Semantic Similarity Checks

Compare input embeddings against a reference distribution built from clean training data. Inputs that have drifted semantically — even if grammatically correct — can be flagged for review. High effectiveness against word-level attacks.

Spell Checking & Input Sanitization

Run inputs through a spell corrector before classification. Directly neutralizes DeepWordBug and similar character-level attacks at zero ML cost. Combine with synonym normalization for broader coverage. High effectiveness against character attacks specifically.

Ensemble Voting

Route inputs through multiple models with different architectures or training data. An adversarial example crafted for one model rarely transfers perfectly to others. Majority vote significantly reduces attack success rates at the cost of inference latency.

Adversarial Training

Generate adversarial examples during training and include them alongside clean examples in each batch. The model learns to classify both correctly. The most durable long-term defense — but requires ongoing regeneration as new attack methods emerge.

Certified Robustness

Techniques like randomized smoothing and interval bound propagation provide formal mathematical guarantees that predictions won’t change within a defined perturbation radius. Most relevant for high-stakes deployments where empirical defenses aren’t sufficient.

What’s Coming Next

The current lab implements TextFooler, BERT Attack, and DeepWordBug. The roadmap expands coverage across the adversarial attack landscape — from gradient-based white-box attacks to LLM-specific threats:

- TextBugger — combined word and character-level perturbation for higher success rates

- HotFlip — white-box gradient-based substitution using backpropagation

- PWWS — probability-weighted word saliency attack using WordNet synonyms

- Genetic Attack — evolutionary search over the perturbation space for complex constraints

- Prompt Injection — LLM-specific instruction hijacking via adversarial prompts

- Jailbreak Attacks — safety guardrail bypass techniques for instruction-tuned models

Key Research Papers

| Paper | Authors | Venue | Attack |

|---|---|---|---|

| Is BERT Really Robust? | Jin et al. | ACL 2019 | TextFooler |

| BERT-ATTACK | Li et al. | EMNLP 2020 | BERT Attack |

| Black-box Adversarial Text Sequences | Gao et al. | IEEE S&P 2018 | DeepWordBug |

| TextAttack: A Framework for NLP Attacks | Morris et al. | EMNLP 2020 | Framework |

| MITRE ATLAS — AML.T0015 | MITRE | https://atlas.mitre.org/ | Taxonomy |

Explore the Full Lab on GitHub

All source code, attack implementations, Docker setup, TextAttack integration, and HTML report generation are open source. Star the repo if you find it useful — contributions and PRs are welcome.

Exploring ML security? Discover more adversarial research, open-source security tools, and deep technical projects on my

portfolio →